Mind Palace XR

OCAD U Open Research Repository: https://openresearch.ocadu.ca/id/eprint/4639

The Idea

Inspired by the ancient "Method of Loci" mnemonic technique and motivated by the challenge of human forgetting, Mind Palace XR explores how Artificial Intelligence (AI) and Augmented Reality (AR) can be integrated to enhance memory. This Unity-based application aims to transform the user's real-world surroundings into an interactive, personalized memory palace. After capturing the environment through a device camera, the system runs a multi-stage AI pipeline (Google Gemini for scene understanding, FalAI for 2D artistic interpretation, and StabilityAI for 3D model generation) to create tangible 3D memory cues. These 3D models are then anchored within the user's augmented space, allowing users to draw on spatial memory principles for improved information recall and creating a dynamic, world-scale repository for memories.

Development

Mind Palace XR is the final prototype to emerge from an iterative research-through-design process involving 15 distinct prototypes that explored related concepts. The core development involved:

- Platform: Built using the Unity engine for AR deployment.

- Input: Uses the real-time video feed from the device's camera (tested on a Meta Quest 3 HMD) to capture the user's environment (README Overview).

- AI Pipeline: Implemented a sequential workflow connecting multiple AI APIs:

  - The captured image is sent to Google's Gemini Vision API for detailed scene description generation.

  - The text description is passed to the FalAI API to generate a stylized 2D image.

  - The 2D image is then converted into a 3D model using StabilityAI's 3D generation API.

- AR Integration: Generated 3D models (in glTF format) are loaded and displayed in the user's AR space using the glTFast package in Unity.

- Features: Includes UI controls for initiating capture, provides feedback during processing, and offers local storage for the generated 3D memory objects with timestamps (README Features, Workflow).

- Assistance: AI coding assistants (OpenAI, Anthropic models) were used during code implementation and refinement.
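The sequential workflow and timestamped local storage described in the list above can be illustrated with a minimal, language-neutral sketch. It is written in Python for brevity and is not the project's Unity code. The names `PipelineStages`, `run_pipeline`, and `timestamped_name`, the file-name pattern, and the `.glb` extension are all assumptions made for illustration. Each stage is an injected callable standing in for the corresponding external service, so no real API signatures are implied.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from pathlib import Path
from typing import Callable, Optional


@dataclass
class PipelineStages:
    """Hypothetical stage callables; each would wrap one external API call."""
    describe_scene: Callable[[bytes], str]    # camera frame -> scene description
    generate_image: Callable[[str], bytes]    # description -> stylized 2D image
    generate_model: Callable[[bytes], bytes]  # 2D image -> 3D model bytes


def timestamped_name(now: datetime, prefix: str = "memory", ext: str = ".glb") -> str:
    """Build a file name that embeds the capture time (naming scheme is assumed)."""
    return f"{prefix}_{now.strftime('%Y%m%dT%H%M%SZ')}{ext}"


def run_pipeline(
    frame: bytes,
    stages: PipelineStages,
    out_dir: Path,
    now: Optional[datetime] = None,
) -> Path:
    """Run the three stages in order and store the resulting model locally."""
    now = now or datetime.now(timezone.utc)
    description = stages.describe_scene(frame)   # stage 1: vision-to-text
    image = stages.generate_image(description)   # stage 2: text-to-image
    model = stages.generate_model(image)         # stage 3: image-to-3D
    out_dir.mkdir(parents=True, exist_ok=True)
    path = out_dir / timestamped_name(now)
    path.write_bytes(model)
    return path


if __name__ == "__main__":
    import tempfile

    # Stub stages so the sketch runs without network access or API keys.
    stubs = PipelineStages(
        describe_scene=lambda img: f"scene captured from {len(img)} bytes",
        generate_image=lambda text: text.encode("utf-8"),
        generate_model=lambda img: b"stub-model:" + img,
    )
    with tempfile.TemporaryDirectory() as tmp:
        saved = run_pipeline(b"\x00\x01", stubs, Path(tmp))
        print("saved model to", saved.name)
```

Passing the stages in as callables keeps the ordering logic separate from any particular provider, so a stage could be swapped or stubbed for testing without touching the rest of the flow.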

Reflection

As a capstone thesis prototype, Mind Palace XR demonstrated the technical feasibility of integrating complex, multi-modal AI services (vision-to-text, text-to-image, image-to-3D) within a real-time AR application to explore futuristic memory augmentation concepts. The project tangibly realised the idea of creating automated, spatially anchored 3D memory cues inspired by the Method of Loci, showcasing a novel application of AI and AR. Public exhibition at DFX 2025 across five days generated consistently positive responses from a diverse audience that included AR practitioners, researchers, students, and general visitors. It also surfaced a range of real-world applications that had not been anticipated during development. As a speculative piece developed through research-through-design, the work prioritised technical integration and conceptual validation over controlled usability testing or the refinement of aesthetic and emotional dimensions; these remain active directions for future development.

What Worked

- Successful real-time integration of a multi-step pipeline involving camera capture, diverse AI APIs (Gemini, FalAI, StabilityAI), and AR rendering in Unity.

- Demonstrated the core concept of automatically generating and placing 3D memory representations in the user's augmented environment based on real-world scenes.

- Served as an effective proof-of-concept for speculative research exploring AI/AR augmentation of mnemonic techniques.

- The interaction design, particularly the grab mechanic and floating navigation window, was independently validated by exhibition visitors as smooth, intuitive, and effective.

- The concept of spatial association was immediately legible to visitors without explanation: multiple people made the connection between 3D anchor placement and memory recall unprompted.

- Exhibition feedback surfaced concrete, unsolicited use cases across diverse contexts (cognitive support for the elderly, task reminders in everyday environments, creative workflow assistance, and wayfinding), indicating that the concept translates well beyond its academic framing.

- The human-agency design principle, keeping the user in control while delegating generative tasks to AI, was noticed and valued by visitors, suggesting the ethical framing of the project is perceptible at the interaction level.

What Did Not Work / Limitations

- No controlled empirical study was conducted to validate memory enhancement claims. The exhibition provided qualitative evidence of engagement and perceived utility, but systematic measurement of actual memory improvement remains for future work.

- Responses were collected during the live exhibition rather than through structured user research; they were enthusiastic but are not analytically comparable across participants.

- Performance, latency, cost, and output consistency are dependent on third-party AI APIs, introducing variability outside developer control.

- The technical focus of the prototype limited deeper exploration of user experience nuances, aesthetic control, and the emotional dimensions of memory. Visitors occasionally commented on these aspects, for example the reflective quality of the 3D textures and the nostalgic character of the 2D visuals.

- Exhibition feedback surfaced open questions that require further development: how 3D model placement scales outdoors and around large structures; whether placed anchors persist reliably across sessions; and how the system performs offline or in low-connectivity environments.

- Planned improvements (conversational AI integration, VR support, local on-device AI, and accessibility features for vision-impaired users) remain outside the current prototype's scope.

Public Reception

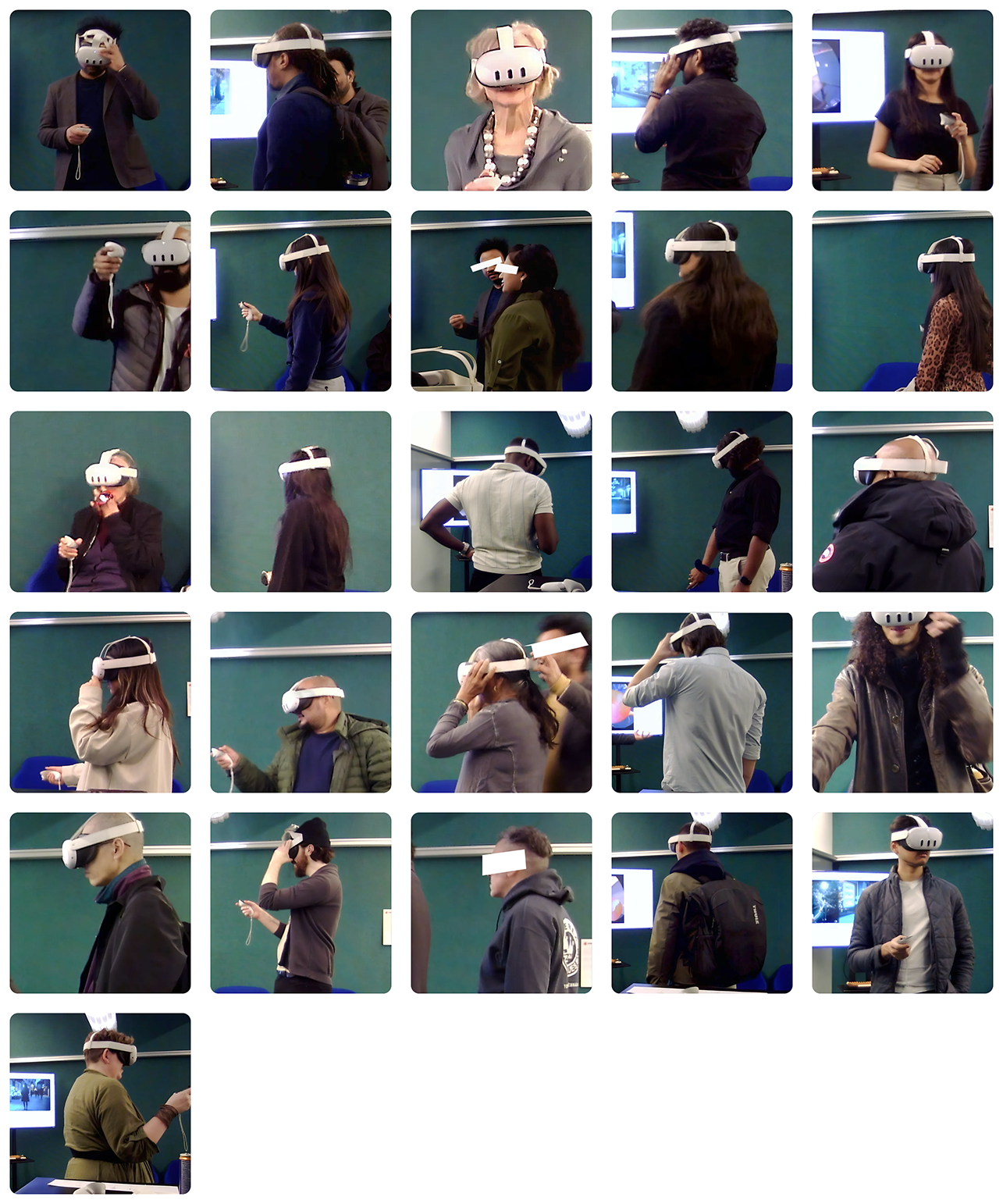

Mind Palace XR was exhibited over five days as part of the OCAD University graduate showcase. Visitors interacted with the prototype live, experiencing the full AI pipeline in real time through a Meta Quest 3 headset. The responses below were collected directly during the exhibition and reflect unscripted, first-hand reactions to the system.

Curated Quotes

Quote 1 (Technical credibility): "This is incredibly impressive. The way the app seamlessly connects different AI models to deliver results within seconds is truly mind-blowing and showcases the power of advanced technology in a remarkably smooth and efficient way."

Quote 2 (UX and future potential): "It's fascinating to see how the app explores the concept of association: how we link a 3D shape to a specific event or location. It holds a lot of potential for everyday use, especially as smart glasses become thinner and more comfortable to wear."

Quote 3 (From a practitioner): "As an AR artist who has worked with Adobe Aero, I find the integration of AI particularly fascinating, especially in how it helps reduce cognitive load during the creative process. It's exciting to see large language models, image generation models, and 3D generation models coming together in such an innovative way."

Quote 4 (Solves a real problem): "We often take photos and screenshots with the intention of revisiting them, but we tend to forget or never get around to actually opening them. The way this app integrates 3D models into our natural walking experience in AR, appearing seamlessly without any need to search or query, makes the interaction effortless and intuitive."

Quote 5 (Concrete real-world use case): "This app is impressive and shows great potential, especially for helping the elderly. I often forget which clerk I spoke to at the bank, and this app could mark the location of the clerk I usually go to. It would also be very useful while grocery shopping: the floating 3D markers could serve as helpful reminders of what I need to pick up."

Quote 6 (Human agency framing): "It's great to see that the app emphasises keeping agency with humans, ensuring that people remain in control. I also appreciate the thoughtful approach to balancing what humans need with what AI excels at, which creates a more intuitive and effective experience."

Summary

Across five days, visitors consistently noted the novelty of the experience and the system's speed; the ability to generate 3D memory anchors in real time was frequently described as impressive. The interaction design received positive attention: the grab mechanic, the floating navigation window, and the frictionless AR placement were all highlighted as smooth and intuitive. Visitors from creative and technical backgrounds saw immediate applications, from reducing cognitive load in creative work to supporting memory in everyday contexts like shopping and social navigation. Recurring questions about outdoor scale, model persistence, and offline access have directly shaped the project's next-steps agenda.